Impala → Snowflake migration

Move Impala workloads (SQL, Hive Metastore-backed tables, partitioned Parquet/ORC, UDFs, and orchestrated ETL/ELT) to Snowflake with predictable conversion and verified parity. SmartMigrate makes semantic and performance differences explicit, produces reconciliation evidence you can sign off on, and gates cutover with rollback-ready criteria—so production outcomes are backed by proof, not optimism.

- Scope

- Query and schema conversion

- Semantic and type alignment

- Validation and cutover readiness

- Risk areas

- What are the biggest differences between Impala and Snowflake SQL?

- How do you handle casting, NULL behavior, and timestamp edge cases?

- What happens to Hive Metastore tables and partition-based pipelines?

- Deliverables

- Prioritized execution plan

- Parity evidence and variance log

- Rollback-ready cutover criteria

Is this migration right for you?

- You run production analytics on Impala (CDH/CDP or equivalent), with hundreds/thousands of queries across BI, notebooks, and scheduled pipelines.

- Your lake tables are Hive Metastore-managed (external locations, partitions, schema evolution), and you need a clean replacement contract in Snowflake.

- You have incremental + late-arriving data patterns (partition overwrites, daily closes, backfills) where correctness must be provable.

- You use UDFs / custom SQL libraries (Java/C++ UDFs, string/date parsing helpers) that must be preserved intentionally.

- Your cutover requires objective sign-off (golden queries + thresholds, reconciliation evidence, rollback gates).

- You’re doing a one-time table copy with no semantic dependency on existing queries, KPIs, or pipelines.

- You can tolerate “close enough” outputs and don’t need reconciliation evidence.

- Your Impala usage is ad-hoc / exploratory only, with no operational SLAs

What breaks in an Impala → Snowflake migration

- What are the biggest differences between Impala and Snowflake SQL?

Impala is tightly coupled to the “lake + metastore” model and inherits many Hive-era behaviors, while Snowflake is a managed warehouse with different type coercion, function behavior, and execution patterns. Performance tuning shifts from partition/file layout and engine stats to micro-partition pruning, (selective) clustering, and warehouse sizing. Reliable migration requires handling semantics—not just syntax.

- How do you handle casting, NULL behavior, and timestamp edge cases?

We translate patterns, then validate with targeted edge-case datasets and golden queries where aggregates and joins are sensitive. We explicitly enforce type conversions and time semantics where needed so outputs are stable and auditable.

- What happens to Hive Metastore tables and partition-based pipelines?

We inventory metastore dependencies, define the Snowflake schema/ownership model, and re-home ingestion into Snowflake-native patterns (staging + COPY, continuous ingest where needed). For incremental workloads, we design explicit dedupe/merge rules so partition overwrite semantics are preserved.

- What happens to UDFs?

We catalog and classify UDFs, translate what is straightforward, and re-implement the rest intentionally (SQL UDFs, external functions, or pipeline transforms). UDFs are treated as first-class migration scope—not “later.”

- How do you validate results are correct after SQL translation?

We use layered validation: compiler/execution checks, then reconciliation checks (row counts, profiling, aggregates by key dimensions, sampling diffs) and golden query parity. Thresholds are defined up front so correctness is measurable and auditable.

- Can we migrate with minimal downtime?

Often yes—via a parallel run window with controlled cutover gates and a rollback-ready plan. The approach depends on batch vs continuous ingest, SLA requirements, and downstream consumer behavior. Cutover is gated by reconciliation thresholds and operational readiness.

| Breakage | Mitigation |

|---|---|

What are the biggest differences between Impala and Snowflake SQL? Impala is tightly coupled to the “lake + metastore” model and inherits many Hive-era behaviors, while Snowflake is a managed warehouse with different type coercion, function behavior, and execution patterns. Performance tuning shifts from partition/file layout and engine stats to micro-partition pruning, (selective) clustering, and warehouse sizing. Reliable migration requires handling semantics—not just syntax. | Assess, rewrite where needed, then validate with parity checks. |

How do you handle casting, NULL behavior, and timestamp edge cases? We translate patterns, then validate with targeted edge-case datasets and golden queries where aggregates and joins are sensitive. We explicitly enforce type conversions and time semantics where needed so outputs are stable and auditable. | Assess, rewrite where needed, then validate with parity checks. |

What happens to Hive Metastore tables and partition-based pipelines? We inventory metastore dependencies, define the Snowflake schema/ownership model, and re-home ingestion into Snowflake-native patterns (staging + COPY, continuous ingest where needed). For incremental workloads, we design explicit dedupe/merge rules so partition overwrite semantics are preserved. | Assess, rewrite where needed, then validate with parity checks. |

What happens to UDFs? We catalog and classify UDFs, translate what is straightforward, and re-implement the rest intentionally (SQL UDFs, external functions, or pipeline transforms). UDFs are treated as first-class migration scope—not “later.” | Assess, rewrite where needed, then validate with parity checks. |

How do you validate results are correct after SQL translation? We use layered validation: compiler/execution checks, then reconciliation checks (row counts, profiling, aggregates by key dimensions, sampling diffs) and golden query parity. Thresholds are defined up front so correctness is measurable and auditable. | Assess, rewrite where needed, then validate with parity checks. |

Can we migrate with minimal downtime? Often yes—via a parallel run window with controlled cutover gates and a rollback-ready plan. The approach depends on batch vs continuous ingest, SLA requirements, and downstream consumer behavior. Cutover is gated by reconciliation thresholds and operational readiness. | Assess, rewrite where needed, then validate with parity checks. |

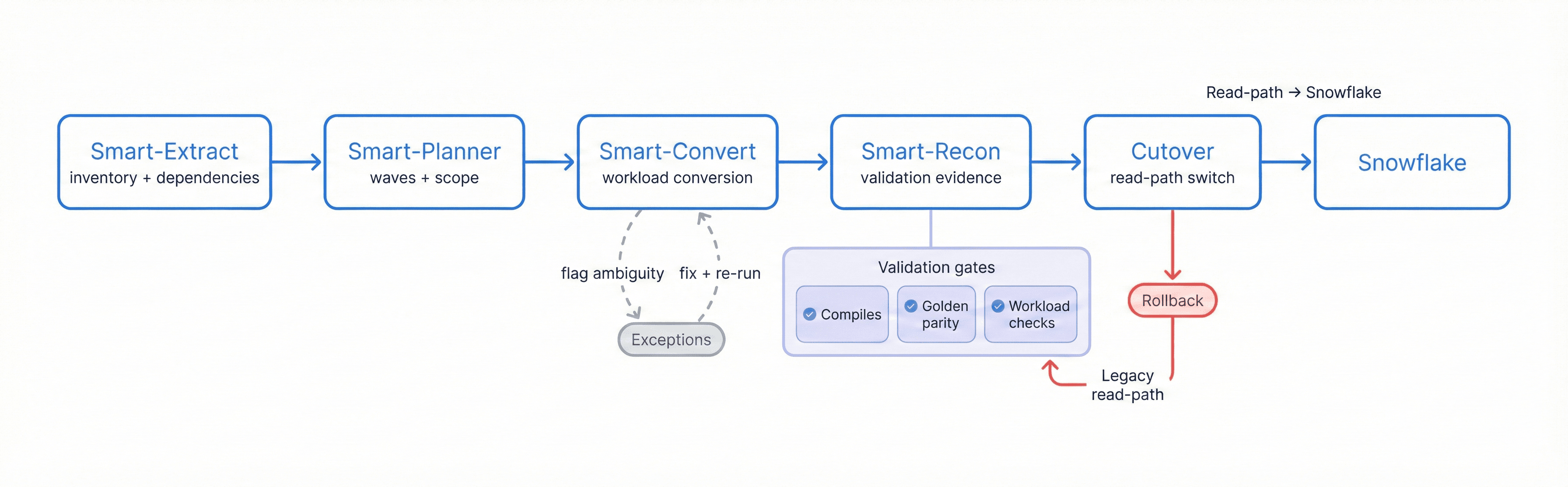

Migration Flow

Extract → Plan → Convert → Reconcile → Cutover to Snowflake, with exception handling, validation gates, and a rollback path

SQL & Workload Conversion Overview

Impala → Snowflake migration is not just “SQL translation.” The objective is to preserve business meaning while replacing Impala’s lake/metastore execution assumptions with Snowflake’s managed warehouse model. SmartMigrate converts what is deterministic, flags ambiguity, and structures the remaining work so engineering teams can resolve exceptions quickly.

What we automate vs. what we flag:

- Automated:

- Common Impala SQL patterns into Snowflake SQL

- Routine DDL mapping (tables/views), basic type mapping, safe function rewrites

- Straightforward join/aggregation conversions

- Baseline view conversion and dependency graphing

- Standard ingestion scaffolding (staging → load) when patterns are deterministic

- Flagged as “review required”:

- Implicit casts and NULL-sensitive logic

- Timestamp parsing, timezone behavior, and string-to-date patterns

- Decimal precision/rounding edge cases

- UDF calls and custom function semantics

- Incremental semantics: overwrite vs append vs merge, dedupe rules

- Performance-sensitive query shapes (skewed joins, large intermediates)

- Manual by design:

- Final ingestion architecture (batch vs near-real-time) and data movement tooling choices

- Incremental strategy (staging + MERGE, streams/tasks, late-data corrections)

- Warehouse strategy (size, auto-suspend/resume, workload isolation, multi-cluster)

- Selective clustering/materialization for hot workloads

- UDF replacement plan (SQL UDF / external function / pipeline transform)

Common Failure Modes

- Implicit CAST and NULL behavior driftImpala/Hive-era coercions (especially strings → numerics/dates) and NULL handling differences silently change filter outcomes, aggregates, and join matches.

- Timestamp parsing and timezone driftLocal-time assumptions and permissive parsing produce off-by-hours/day errors, especially in daily rollups and fiscal calendars.

- Partition overwrite semantics lostPipelines relying on “insert overwrite partition” or directory-level conventions are migrated as append-only, causing duplicates and inflated KPIs.

- Schema evolution surprisesParquet/ORC evolution and “it reads in Impala” does not guarantee clean ingestion without explicit typing and enforcement; fields can shift, widen, or arrive malformed.

- UDF gapsJava/C++ UDF logic is deferred; downstream reports break or produce inconsistent outputs.

- Metastore/lake coupling breaksQueries assume direct access to lake paths and partition folders; without Snowflake-native ingestion + abstraction, workloads become fragile and operationally hard to reason about.

- Performance whiplashQueries tuned for partition pruning and file stats become slow/expensive if micro-partition pruning keys and warehouse sizing aren’t planned.

- Policy translation missedSentry/Ranger-style policies and ACL expectations aren’t mapped to Snowflake RBAC and object privileges; teams either get blocked or over-permissioned.

Validation & Reconciliation Summary

In an Impala → Snowflake migration, success must be measurable. We validate correctness in layers: first ensuring translated workloads compile and execute reliably, then proving that outputs match expected business meaning via reconciliation.

Validation is driven by pre-agreed thresholds and a defined set of golden queries and datasets. This makes sign-off objective: when reconciliation passes, cutover is controlled; when it fails, you get a precise delta report that identifies where semantics, type mapping, or query logic needs adjustment.

Checks included (typical set):

- Row counts by table and key partitions where applicable

- Null distribution + basic profiling (min/max, distinct counts where appropriate)

- Checksums/hashes for stable subsets where feasible

- Aggregate comparisons by key dimensions (day, region, customer/product keys)

- Sampling diffs: edge partitions, late-arrival windows, known corner cases

- Query result parity for golden queries (reports and KPI queries)

- Post-cutover monitoring plan (latency, credit burn, failures, concurrency/warehouse saturation)

Performance & Optimization Considerations

- Pruning-aware table design:

- Align ingest patterns and dominant filters so micro-partition pruning works for the real query mix.

- Warehouse sizing and isolation:

- Right-size warehouses per workload class; isolate BI from ELT/batch to avoid contention and unpredictable latency.

- Auto-suspend / auto-resume discipline:

- Prevent idle credit burn; structure pipelines so compute is on only when needed.

- Selective clustering (when it pays):

- Apply clustering keys only to tables where pruning materially improves repeated hot queries.

- Materialized views and summary tables:

- Stabilize BI workloads and reduce repeated heavy transforms.

- Incremental correctness and cost:

- Design MERGE/dedupe patterns to avoid full reprocessing, especially for late-arriving data.

- Query plan observability:

- Use Snowflake query history/profiles to detect regressions and validate tuning outcomes.

- Join strategy tuning:

- Reduce large intermediates by managing join cardinality, filters, and spill-prone patterns

Impala → Snowflake migration checklist

- Parity contract existsDo you have signed-off golden queries/reports + thresholds (including casting/NULL/time edge cases) before conversion starts?

- Metastore dependency is understoodHave you inventoried Hive Metastore usage (schemas, partitions, external locations) and decided the Snowflake ownership model (databases/schemas, ownership, governance)?

- Incremental semantics are explicitDo you know which workloads rely on partition overwrite vs append, and have you defined Snowflake equivalents (staging + MERGE, streams/tasks, dedupe rules)?

- Schema evolution is handledHave you decided how to enforce types and handle malformed or evolving fields (reject/quarantine, widen types, versioned schemas)?

- UDF and custom logic are in scopeHave you cataloged UDFs and decided how each will be replaced (SQL UDF, external function, pipeline transform, or retirement)?

- Cutover is rollback-safe under real concurrency and costParallel run + canary gates + rollback criteria + Snowflake guardrails (credits, warehouse saturation, query latency, failure rates) are ready.

Frequently asked questions

What are the biggest differences between Impala and Snowflake SQL?+

How do you handle casting, NULL behavior, and timestamp edge cases? +

What happens to Hive Metastore tables and partition-based pipelines?+

What happens to UDFs? +

How do you validate results are correct after conversion? +

Can we migrate with minimal downtime?+

Get a migration plan you can execute—with validation built in. We’ll inventory your Impala estate (including Hive Metastore dependencies, partitioned tables, UDFs, and orchestration pipelines), convert representative workloads, surface risks in SQL translation and type mapping, and define a validation and reconciliation approach tied to your SLAs. You’ll also receive an incremental-load strategy, a cutover plan with rollback criteria, and performance optimization guidance for Snowflake.