Teradata → Snowflake migration

Move Teradata workloads (SQL/BTEQ scripts, macros, stored procedures, volatile tables, and WLM-shaped concurrency) to Snowflake with predictable conversion and verified parity. SmartMigrate makes semantic and performance differences explicit, produces reconciliation evidence you can sign off on, and gates cutover with rollback-ready criteria—so production outcomes are backed by proof, not optimism.

- Scope

- Query and schema conversion

- Semantic and type alignment

- Validation and cutover readiness

- Risk areas

- Teradata-specific SQL semantics (QUALIFY patterns, TOP, set ops, date logic)

- Primary Index, AMP distribution, and stats assumptions

- Volatile tables and session-based workflows

- Deliverables

- Prioritized execution plan

- Parity evidence and variance log

- Rollback-ready cutover criteria

Is this migration right for you?

- You have a mature Teradata estate with hundreds/thousands of queries and BI dependencies

- You rely on macros, procedures, and BTEQ/TPT orchestration in production

- Performance and concurrency predictability matter for SLAs

- You require provable parity and reconciliation evidence before cutover

- You want Snowflake cost governance (credits) and workload isolation from day one

- You’re doing a simple one-time table copy with no semantic dependency on existing queries

- You don’t need reconciliation evidence or rollback guarantees

- There are no critical consumers depending on strict output parity

What breaks in a Teradata → Snowflake migration

- Teradata-specific SQL semantics (QUALIFY patterns, TOP, set ops, date logic)

Snowflake supports modern analytics SQL (including QUALIFY), but edge cases still show up around window frames, ordering stability, NULL handling, date/time rounding, and implicit type coercion—often surfacing as “same query, different KPI.”

- Primary Index, AMP distribution, and stats assumptions

Teradata tuning relies on PI choices, AMP distribution, and collected statistics. Snowflake abstracts storage distribution and doesn’t use the same physical knobs; performance becomes a function of micro-partition pruning, clustering choices (when needed), join strategy, and warehouse sizing.

- Volatile tables and session-based workflows

Teradata pipelines often rely on volatile tables and session semantics. In Snowflake, you typically re-home these to temporary/transient tables, staging schemas, or controlled materializations—otherwise pipelines break or become nondeterministic.

- Macros, stored procedures, and operational SQL (BTEQ/TPT)

Teradata estates are rarely “just SQL.” Macros, stored procedures, BTEQ scripts, FastLoad/MultiLoad/TPT jobs, and scheduler glue must be migrated with an execution plan—or production orchestration fails post-cutover.

- Workload management vs warehouse concurrency

Teradata WLM queues and priorities don’t map 1:1. In Snowflake, concurrency and latency are governed by virtual warehouses, auto-suspend/resume, and (optionally) multi-cluster scaling. Without a plan, BI bursts and batch windows fight each other.

- Hidden BI coupling and “business meaning” drift

Dashboards often depend on undocumented behavior (rounding, casting, ordering, time semantics). If you don’t lock a “parity contract,” correctness becomes a debate at cutover

| Breakage | Mitigation |

|---|---|

Teradata-specific SQL semantics (QUALIFY patterns, TOP, set ops, date logic) Snowflake supports modern analytics SQL (including QUALIFY), but edge cases still show up around window frames, ordering stability, NULL handling, date/time rounding, and implicit type coercion—often surfacing as “same query, different KPI.” | Assess, rewrite where needed, then validate with parity checks. |

Primary Index, AMP distribution, and stats assumptions Teradata tuning relies on PI choices, AMP distribution, and collected statistics. Snowflake abstracts storage distribution and doesn’t use the same physical knobs; performance becomes a function of micro-partition pruning, clustering choices (when needed), join strategy, and warehouse sizing. | Assess, rewrite where needed, then validate with parity checks. |

Volatile tables and session-based workflows Teradata pipelines often rely on volatile tables and session semantics. In Snowflake, you typically re-home these to temporary/transient tables, staging schemas, or controlled materializations—otherwise pipelines break or become nondeterministic. | Assess, rewrite where needed, then validate with parity checks. |

Macros, stored procedures, and operational SQL (BTEQ/TPT) Teradata estates are rarely “just SQL.” Macros, stored procedures, BTEQ scripts, FastLoad/MultiLoad/TPT jobs, and scheduler glue must be migrated with an execution plan—or production orchestration fails post-cutover. | Assess, rewrite where needed, then validate with parity checks. |

Workload management vs warehouse concurrency Teradata WLM queues and priorities don’t map 1:1. In Snowflake, concurrency and latency are governed by virtual warehouses, auto-suspend/resume, and (optionally) multi-cluster scaling. Without a plan, BI bursts and batch windows fight each other. | Assess, rewrite where needed, then validate with parity checks. |

Hidden BI coupling and “business meaning” drift Dashboards often depend on undocumented behavior (rounding, casting, ordering, time semantics). If you don’t lock a “parity contract,” correctness becomes a debate at cutover | Assess, rewrite where needed, then validate with parity checks. |

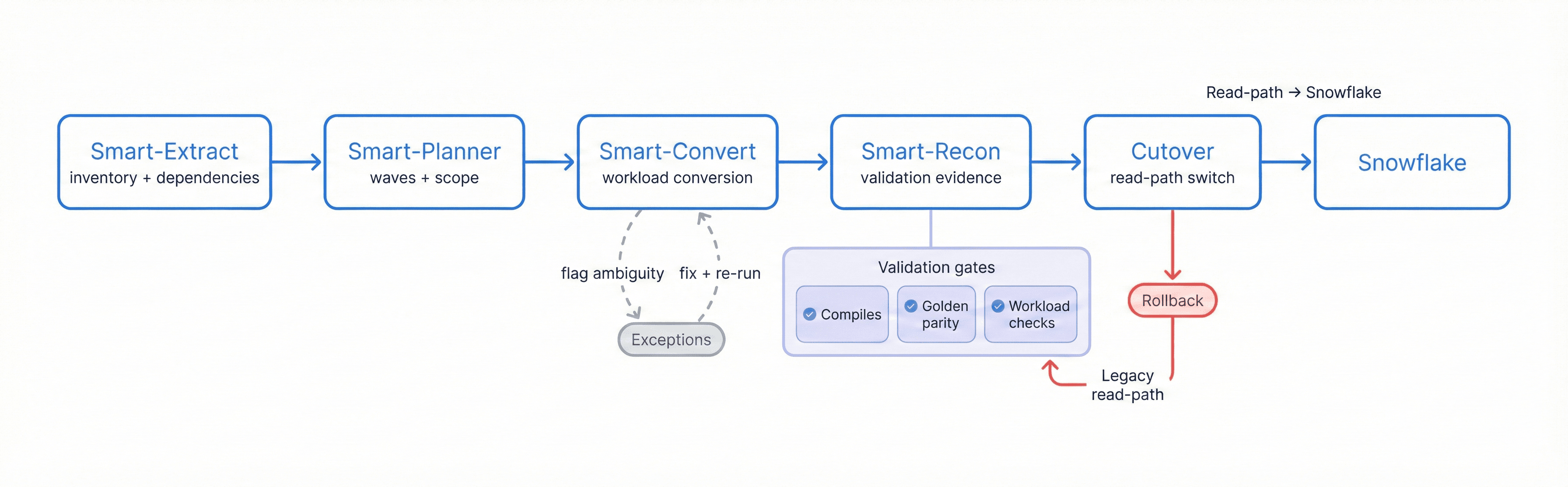

Migration Flow

Extract → Plan → Convert → Reconcile → Cutover to SnowFlake, with exception handling, validation gates, and a rollback path

SQL & Workload Conversion Overview

Teradata → Snowflake migration is not just “SQL translation.” The objective is to preserve business meaning while aligning to Snowflake’s execution model, governance patterns, and operational cost controls. SmartMigrate converts what is deterministic, flags ambiguity, and structures the remaining work so engineering teams can resolve exceptions quickly.

What we automate vs. what we flag:

- Automated: Common Teradata SQL patterns into Snowflake SQL, routine DDL mapping, straightforward join/aggregation conversions, safe function rewrites, baseline view conversion, and standard temp/staging table translations.

- Flagged as “review required”: QUALIFY/window edge cases (frames + ordering), implicit casts and NULL-sensitive logic, volatile-table/session workflows, macros/procedures, transaction-style scripts, and performance-sensitive query shapes.

- Manual by design: Final warehouse strategy (size, auto-suspend/resume, multi-cluster), clustering/materialization strategy for hot workloads, procedure/macro execution design, and operational scheduling patterns.

Common failure modes

- QUALIFY and ranking driftAnalytic filters and window logic translate syntactically but change edge-case outputs (frames, ties, ordering stability).

- PI/AMP assumptions carried overTeradata physical tuning is treated as “schema,” and Snowflake performance degrades because pruning/clustering/warehouse sizing weren’t planned.

- Volatile table workflow breaksSession-based pipelines lose their staging semantics and fail mid-stream (or become nondeterministic under retries).

- Macro/procedure gapsMacros and stored procedures are deferred, and operational workloads break after cutover.

- Load tool mismatchFastLoad/MultiLoad/TPT patterns aren’t re-homed cleanly (stages/COPY/Snowpipe/Streams+Tasks), causing slow loads and fragile backfills.

- Implicit CAST behaviorDifferences in numeric/timestamp casting silently change aggregates and join matches.

- Optimizer expectation surprisesTeradata stats/tuning expectations don’t carry; Snowflake performance requires pruning-aware table design, selective clustering (when warranted), and warehouse right-sizing.

- Cost model whiplashWorkloads tuned for Teradata run “fast” but expensive in Snowflake due to warehouse sizing, concurrency bursts, or long-running transforms without auto-suspend discipline.

Validation and reconciliation you can sign off on

In a Teradata → Snowflake migration, success must be measurable. We validate correctness in layers: first ensuring translated workloads compile and execute reliably, then proving that outputs match expected business meaning via reconciliation.

Validation is driven by pre-agreed thresholds and a defined set of golden queries and datasets. This makes sign-off objective: when reconciliation passes, cutover is controlled; when it fails, you get a precise delta report that identifies where semantics, type mapping, or query logic needs adjustment.

Checks included (typical set):

- Row counts by table and key partitions where applicable

- Null distribution + basic profiling (min/max, distinct counts where appropriate)

- Checksums/hashes for stable subsets where feasible

- Aggregate comparisons by key dimensions (day, region, customer/product keys)

- Sampling diffs: top-N, edge partitions, known corner cases

- Query result parity for golden queries (reports and KPI queries)

- Post-cutover SLA monitoring plan (latency, credit burn, failures, concurrency/warehouse saturation)

Performance optimization in BigQuery

- Warehouse sizing and isolation:

- Right-size warehouses per workload class; isolate BI from batch to avoid noisy-neighbor contention.

- Auto-suspend / auto-resume discipline:

- Prevent idle burn; structure pipelines so compute is on only when needed.

- Pruning-aware table design:

- Organize data (ingestion patterns + filter keys) to maximize micro-partition pruning.

- Selective clustering (when it pays):

- Apply clustering keys only to tables where pruning materially improves repeated hot queries.

- Materialized views and summary tables:

- Stabilize BI workloads and reduce repeated heavy transforms.

- Concurrency controls:

- Use separate warehouses, resource monitors, and (optionally) multi-cluster scaling for predictable peak behavior.

- Query plan observability:

- Use query history/profiles to detect regressions and validate tuning outcomes.

- Join strategy tuning:

- Reduce large intermediates by managing join cardinality, filters, and spill-prone patterns.

Teradata → Snowflake migration checklist

- Parity contract existsDo you have signed-off golden queries/reports + thresholds (including QUALIFY/window edge cases and casting/time behavior) before conversion starts?

- Operational SQL is in scope (not “later”)Do you have a plan for macros, procedures, BTEQ scripts, and load jobs (TPT/FastLoad/MultiLoad) with operational parity?

- Volatile-table workflows are accounted forHave you identified session-based staging patterns and decided the Snowflake equivalents (TEMP/TRANSIENT tables, staging schemas, controlled materialization)?

- Warehouse and concurrency strategy is explicitHave you defined warehouse sizing, auto-suspend/resume, workload isolation (BI vs batch), and whether multi-cluster is needed for peak concurrency?

- Cutover is rollback-safe under real concurrency and costParallel run + canary gates + rollback criteria + Snowflake guardrails (credits, warehouse saturation, query latency, failure rates) are ready.

Frequently asked questions

What are the biggest differences between Teradata and Snowflake SQL? +

How do you handle QUALIFY and window function differences? +

What happens to macros, procedures, and BTEQ/TPT scripts?+

How do you validate results are correct after SQL translation? +

How do you estimate Snowflake cost after migration? +

Can we migrate with minimal downtime? +

Get a migration plan you can execute—with validation built in. We’ll inventory your Teradata estate (including macros, procedures, BTEQ/TPT jobs, and volatile-table workflows), convert representative workloads, surface risks in SQL translation and type mapping, and define a validation and reconciliation approach tied to your SLAs. You’ll also receive a cutover plan with rollback criteria and performance optimization guidance for Snowflake.